Yeah! Yeah! Yeah! Louis is back. Do you remember him from Altavista? Qwiki has taken the top prize at TechCrunch Disrupt San Francisco. Check it out: the demo is quite compelling, and I subscribed to the alpha service.

Now I want to see Fuzzy Mauldin, back in action

Random commentary about Machine Learning, BigData, Spark, Deep Learning, C++, STL, Boost, Perl, Python, Algorithms, Problem Solving and Web Search

Thursday, September 30, 2010

Facebook 2nd Video site in U.S. and they do not host videos?

Comscore for video is out. Facebook is the second one, but they do not host video. Yahoo! is no longer the second one in the category.

Tuesday, September 28, 2010

TechCrunch editor is tired and he sold to AOL

I am a big fan of TechCrunch, whose posts I read voraciously. I am not sure that I was happy when I read this:

"The truth is I was tired. But I wasn’t tired of writing, or speaking at events. I was tired of our endless tech problems, our inability to find enough talented engineers who wanted to work, ultimately, on blog and CrunchBase software. And when we did find those engineers, as we so often did, how to keep them happy. Unlike most startups in Silicon Valley, the center of attention at TechCrunch is squarely on the writers. It’s certainly not an engineering driven company.

AOL of course fixes that problem perfectly. They run the largest blogging network in the world and if we sold to them we’d never have to worry about tech issues again. We could focus our engineering resources on higher end things and I, for one, could spend more of my day writing and a lot less time dealing with other stuff."

So they sold to AOL

Got a(nother) patent for Video Hover Preview

Hmm... today is a really strange day, since I discovered that my ex-Ask.com Pisa group received another patent assignment.

This time it is about Video Search. Back in 2005, we patented the idea of Video Hover preview. These days, Video Hover is quite a popular feature on many video sites.

Abstract: A method and system to preview video content. The system comprises an access component to receive a search request and a loader to simultaneously stream a plurality of videos associated with the search request. The system may further comprise a trigger to detect a pointer positioned over a first video and a mode selector to provide the first video in a preview mode.

Inventors:

Gulli; Antonino (Pisa, IT)

Savona; Antonio (Sora, IT)

Veri; Mario (Rocca San Giovanni, IT)

Yet another achievement for Pisa, the "nowhere place"


Monday, September 27, 2010

Peter Thiel: Facebook Won’t IPO Until 2012 At The Earliest

Quoting TechCrunch: "Facebook’s first investor Peter Thiel told Fox Business today that Facebook will not IPO until 2012. Earlier this summer, Bloomberg reported that Facebook was holding off on its IPO for another two years and Thiel seems to confirm this line of thinking. Thiel says that Facebook wants to follow the example of Google, and doesn’t want to IPO until late in the process (which he claims is a byproduct of Sarbanes Oxley and other regulation)."

Sunday, September 26, 2010

Fishes in a lake

There is an unknown number of fish in a lake and you need to estimate it. You start fishing and you capture 50 fish, then you mark them all with a special pen and throw them back into the water. You decide to start fishing again and you recapture 2 marked fish. So how many fish are there in the lake?
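One common way to attack this puzzle is the capture-recapture (Lincoln-Petersen) estimate: the fraction of marked fish in the second catch should roughly match the fraction of marked fish in the whole lake. The post does not say how many fish were caught in the second round, so the sketch below assumes, purely for illustration, that the second catch is also 50 fish. The function name is hypothetical.

```python
def lincoln_petersen(marked_first, caught_second, recaptured):
    """Estimate a population size from a mark-and-recapture experiment.

    marked_first  -- fish marked and released in the first round
    caught_second -- fish caught in the second round (ASSUMED, not in the post)
    recaptured    -- marked fish found in the second round
    """
    if recaptured <= 0:
        raise ValueError("need at least one recaptured fish to estimate")
    # recaptured / caught_second ~= marked_first / population
    return marked_first * caught_second / recaptured


# Assumption: the second catch is 50 fish, 2 of which are marked.
print(lincoln_petersen(50, 50, 2))
```

With a different second catch size the estimate scales linearly, so the assumed 50 matters a lot.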

Saturday, September 25, 2010

Got a patent for Image Search

So I got a patent. It's quite a long process: it started back in Sept 2005 and ended in Sept 2010.

Abstract: "A system and method for determining if a set of images in a large collection of images are near duplicates allows for improved management and retrieval of images. Images are processed, image signatures are generated for each image in the set of images, and the generated image signatures are compared. Detecting similarity between images can be used to cluster and rank images."

Please note that the patent was submitted before the classic paper PageRank for Product Image Search (2008) from Google, which presented similar ideas.

The algorithms described in the patent were used in Ask.com Image Search, which received the following judgement from PC-World in 2007: "Returned very accurate image results, and it has a well-designed search results page. This is a worthy alternative to Google"

Inventors are:

Gulli'; Antonino (Pisa, IT)

Savona; Antonio (Sora, IT)

Yang; Tao (Santa Barbara, CA)

Liu; Xin (Piscataway, NJ)

Li; Beitao (Piscataway, NJ)

Choksi; Ankur (Somerset, NJ)

Tanganelli; Filippo (Castiglioncello, IT)

Carnevale; Luigi (Pisa, IT)

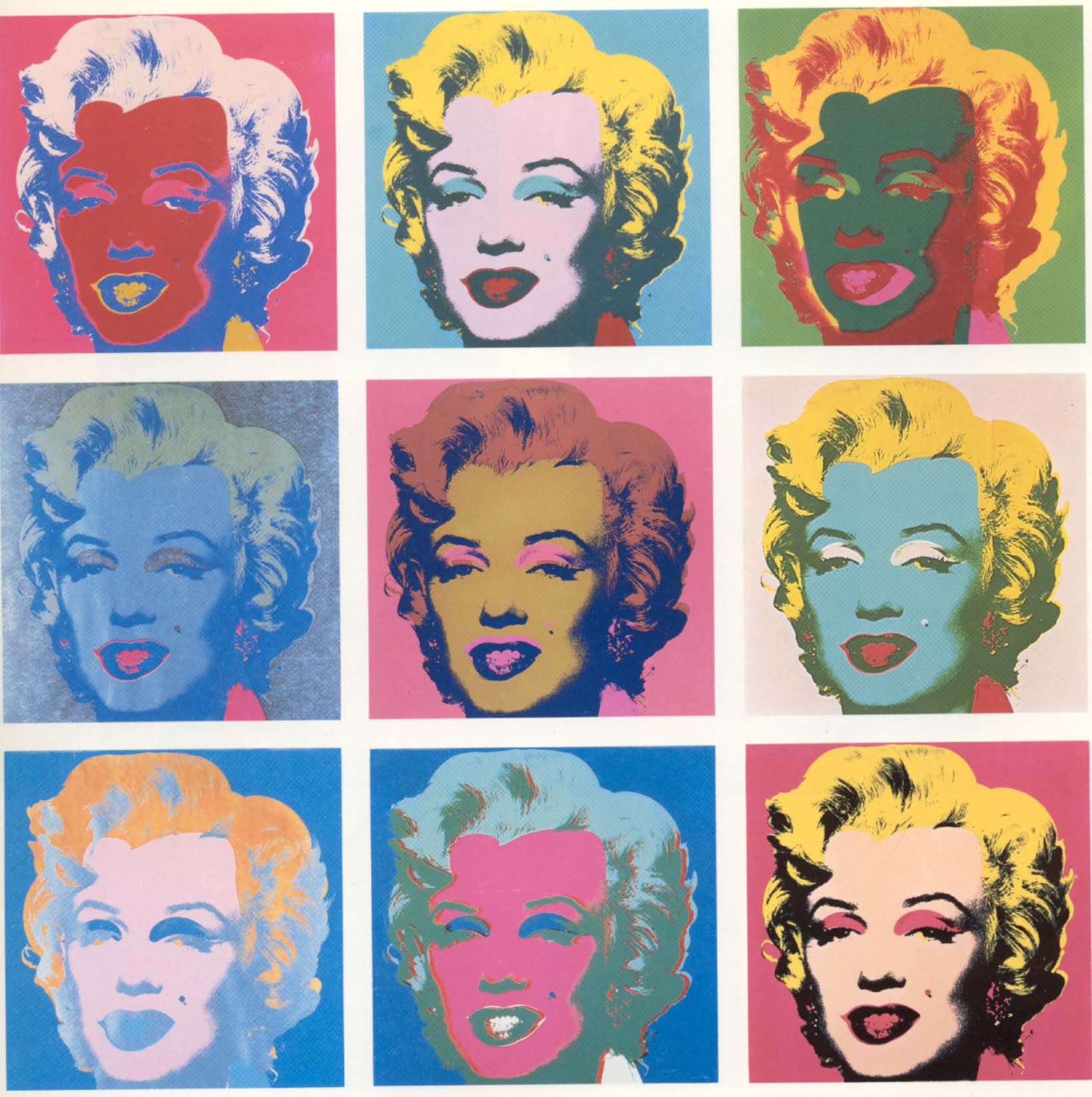

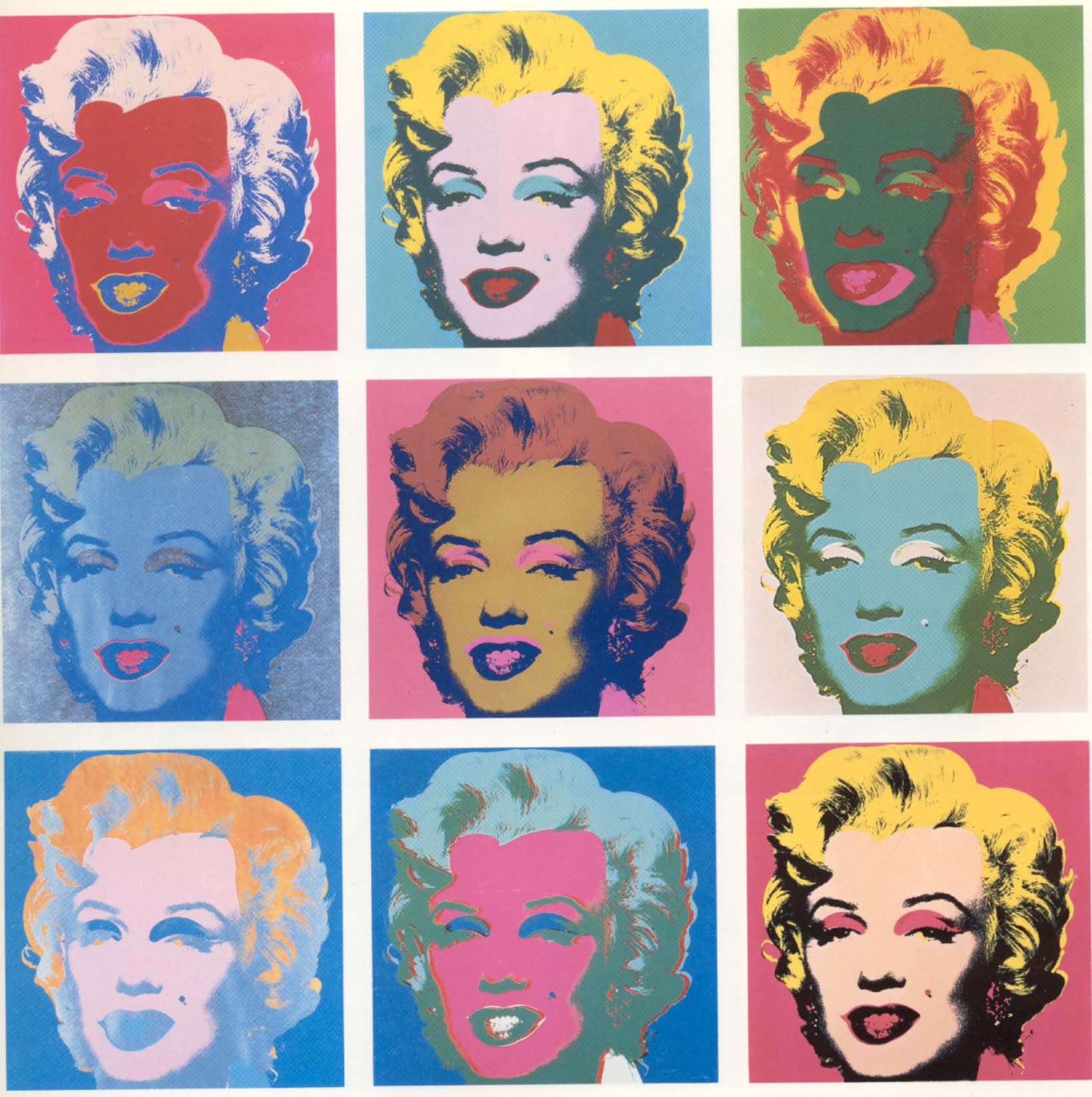

This idea was inspired by a quite famous Andy Warhol painting.

I am particularly proud of this patent, since it proves that innovation can be carried out even from Pisa, a "nowhere place in Italy" ;-). Bet won!


Friday, September 24, 2010

Ensemble Methods in Data Mining: Improving Accuracy Through Combining Prediction

A very interesting read, and the PDF is free: "Ensemble methods have been called the most influential development in Data Mining and Machine Learning in the past decade. They combine multiple models into one usually more accurate than the best of its components. Ensembles can provide a critical boost to industrial challenges -- from investment timing to drug discovery, and fraud detection to recommendation systems -- where predictive accuracy is more vital than model interpretability. Ensembles are useful with all modeling algorithms, but this book focuses on decision trees to explain them most clearly. After describing trees and their strengths and weaknesses, the authors provide an overview of regularization -- today understood to be a key reason for the superior performance of modern ensembling algorithms. The book continues with a clear description of two recent developments: Importance Sampling (IS) and Rule Ensembles (RE). IS reveals classic ensemble methods -- bagging, random forests, and boosting -- to be special cases of a single algorithm, thereby showing how to improve their accuracy and speed. REs are linear rule models derived from decision tree ensembles. They are the most interpretable version of ensembles, which is essential to applications such as credit scoring and fault diagnosis. Lastly, the authors explain the paradox of how ensembles achieve greater accuracy on new data despite their (apparently much greater) complexity."

Thursday, September 23, 2010

Facebook down -- Internet is crying

So it happens... When you are a little baby and you are about to become a kid, you need to make your own mistakes, and sometimes you need to fall down. Nothing wrong with it. Your mistakes will make you an adult.

So it happens... Facebook went down for 2.5 hours. My friend Nick wonders whether this increased productivity all over the world ...

Apparently, the problem was caused by an automatic system used to maintain cache consistency. Now Facebook is an adult, like Google, Bing, and Yahoo. All of them have gone down.

Wednesday, September 22, 2010

Find the majority

I used this question in my interviews (medium-hard). You have a very long array of integers. Can you find the integer that appears the majority of times, with no additional memory and in less than linear time?
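One well-known answer to this kind of question is the Boyer-Moore majority vote, sketched below. It uses constant extra memory, but it is a linear-time algorithm: an exact answer has to look at every element at least once, so this sketch does not achieve the "less than linear" bound stated in the question. The sketch also assumes that "majority" means strictly more than half of the elements; the function names are illustrative.

```python
def majority_candidate(values):
    """Boyer-Moore vote: the only value that can possibly be a strict majority."""
    candidate, count = None, 0
    for v in values:
        if count == 0:
            candidate, count = v, 1   # start backing a new candidate
        elif v == candidate:
            count += 1                # one more vote for the candidate
        else:
            count -= 1                # cancel one vote against it
    return candidate


def majority(values):
    """Return the strict-majority element, or None if there is none."""
    candidate = majority_candidate(values)
    # Second pass: the vote only guarantees correctness if a majority exists.
    occurrences = sum(1 for v in values if v == candidate)
    if values and 2 * occurrences > len(values):
        return candidate
    return None


print(majority([3, 1, 3, 2, 3, 3, 1]))
```

The second pass can be skipped only when the caller already knows that a majority element exists.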

Tuesday, September 21, 2010

Random generation

Given a random generator function rand04() that generates random numbers between 0 and 4 (i.e., 0,1,2,3,4) with equal probability, write a random generator rand07() that generates numbers between 0 and 7 (0,1,2,3,4,5,6,7) with equal probability.
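A possible approach is rejection sampling: two calls to rand04() give 25 equally likely pairs, 24 of them can be split evenly into 8 groups of 3, and the remaining pair is thrown away and retried. In the sketch below, rand04() is simulated with Python's random module (an assumption, since the puzzle treats it as a given black box), and combine() is a hypothetical helper.

```python
import random


def rand04():
    """Stand-in for the given generator: uniform over 0..4."""
    return random.randint(0, 4)


def combine(a, b):
    """Map a pair of rand04() draws to 0..7, or None if the pair is rejected."""
    value = 5 * a + b          # uniform over 0..24
    if value >= 24:            # 25 is not a multiple of 8: drop one outcome
        return None
    return value % 8           # 24 outcomes -> each of 0..7 exactly 3 times


def rand07(source=rand04):
    """Uniform over 0..7, built only from calls to `source`."""
    while True:
        result = combine(source(), source())
        if result is not None:
            return result


print([rand07() for _ in range(10)])
```

Each attempt succeeds with probability 24/25, so the expected number of rand04() calls per output is a little above two.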

Monday, September 20, 2010

Maximum contiguous sum in an array

Given an array of integers, find the contiguous sequence with maximum sum.
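A standard linear-time answer is Kadane's algorithm. The sketch below assumes a non-empty array and that the sequence must contain at least one element (so an all-negative array returns its largest element); the function name is illustrative.

```python
def max_contiguous_sum(values):
    """Return (best_sum, best_slice) for the maximum-sum contiguous run."""
    if not values:
        raise ValueError("expected a non-empty array")
    best_sum = current_sum = values[0]
    best_start = best_end = current_start = 0
    for i in range(1, len(values)):
        if current_sum < 0:
            # A negative running sum can only hurt: restart at position i.
            current_sum = values[i]
            current_start = i
        else:
            current_sum += values[i]
        if current_sum > best_sum:
            best_sum, best_start, best_end = current_sum, current_start, i
    return best_sum, values[best_start:best_end + 1]


print(max_contiguous_sum([-2, 1, -3, 4, -1, 2, 1, -5, 4]))
```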

Your Personal Ads Are Waiting for You

A dear friend of mine pointed out the work of ImmersiveLabs on targeted ads. Their experience made me formalize some random thoughts I had had in mind for a long time.

Last century was the age of massive and unpersonalized ads on TV, radio, and newspapers.

Ten years ago we saw the beginning of personalized ads with Google. The model was simple and therefore a huge revolution. You search for what you want, I give you the results, but we agree that I can use your query for serving targeted ads. Actually, the model was originally proposed by Goto.com and Lycos, but they failed to understand its value... and this is another story.

A couple of years ago we started to see another use of personalization. Again the model is simple. You are in a given place and access a service from there (either with your phone or with your desktop). I provide you the service, but will use your geo-location for serving local ads.

These are the days of multiple sensors. ImmersiveLabs is using a cam to detect your face and your sex. Your iPhone can send information about your walking style or your attitude. Your game console can send information about your training style or what you like to play. Your TV will soon send information about your entertainment needs. Facebook knows every web page you Like.

How many other ad sensors are there out in the world?

Gianni!!


Tuesday, September 14, 2010

Bing is the second one in US according to Nielsen

For the month, Bing garnered 13.9% of all U.S. search traffic, up from 13.6% in July, according to Nielsen. Yahoo's share over the same period declined from 14.6% to 13.1%. Google, meanwhile, made a slight gain, as its share increased from 64.2% in July to 65% in August.

Monday, September 13, 2010

Why Facebook should work on a social graph based eBay engine

I believe that Facebook should work on eBay 2.0, the social graph evolution of the traditional auction market.

On eBay, a user can rate another user according to the quality of the transaction they concluded. Usually, each user takes a look at the seller's rating to assess the potential risk of a bid. Even if each user is identified with a login and a password, the seller and the bidder do not know each other.

Facebook and the social graph would add an additional level of trust, since the seller and the bidder can identify a path of users that connects them and can provide references. LinkedIn adopted a similar mechanism for introducing people who do not know each other. Facebook can use it for creating a new eBay. Alternatively, they can buy a competitor and integrate it into the social graph.
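To make the "path of users" idea concrete, here is a minimal sketch of how such a path could be found with a breadth-first search over a friendship graph. The graph layout (a dictionary from user to list of friends), the user names, and the function name are all hypothetical; this is not a description of how Facebook or LinkedIn actually do it.

```python
from collections import deque


def trust_path(friends, seller, bidder):
    """Shortest chain of friends linking seller to bidder, or None."""
    if seller == bidder:
        return [seller]
    previous = {seller: None}          # also serves as the visited set
    queue = deque([seller])
    while queue:
        user = queue.popleft()
        for friend in friends.get(user, ()):
            if friend in previous:
                continue
            previous[friend] = user
            if friend == bidder:
                # Walk back from the bidder to rebuild the chain.
                path, node = [], bidder
                while node is not None:
                    path.append(node)
                    node = previous[node]
                return path[::-1]
            queue.append(friend)
    return None


graph = {
    "alice": ["bob", "carol"],
    "bob": ["alice", "dave"],
    "carol": ["alice"],
    "dave": ["bob", "erin"],
    "erin": ["dave"],
    "zed": [],
}
print(trust_path(graph, "alice", "erin"))
```

Every intermediate user on the returned chain is a potential reference between the two parties.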


Sunday, September 12, 2010

count the frogs

You have a pond with frogs. Count the frogs.

Saturday, September 11, 2010

Chocolates and roses

You have 21 chocolates and 91 roses. How many bouquets can you make without discarding either a rose or a chocolate? All the bouquets must have the same number of chocolates and the same number of roses.
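If the question is read as asking for the largest number of identical bouquets, the answer is the greatest common divisor of the two counts. That reading is an assumption, and the function name below is illustrative.

```python
from math import gcd


def identical_bouquets(chocolates, roses):
    """Return (bouquets, chocolates_per_bouquet, roses_per_bouquet)."""
    bouquets = gcd(chocolates, roses)   # largest count dividing both totals
    return bouquets, chocolates // bouquets, roses // bouquets


print(identical_bouquets(21, 91))
```

Since 21 = 3 x 7 and 91 = 7 x 13, any divisor of 7 would also work, so a single big bouquet is a valid (if less interesting) answer too.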

Wednesday, September 8, 2010

Instant Search

Google just launched a new form of Instant search. I have a couple of questions for the academic people or maths lovers:

- Estimate what percentage of your query log you can cover with, say, X million queries. Say that this is p%;

- Estimate the average length of a query;

- Evaluate whether it would be better to leverage traditional inverted lists or tries;

- Estimate the impact of caching and of your communication framework;
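For the trie option in the list above, a toy sketch may help to reason about the trade-offs. It stores whole queries with a frequency and returns the most frequent completions of a prefix. The class names, the sample queries and their counts are made up for illustration; a production system would precompute the top completions per node rather than walk the subtree on every keystroke.

```python
class TrieNode:
    def __init__(self):
        self.children = {}   # next character -> TrieNode
        self.count = 0       # frequency of the query ending at this node


class QueryTrie:
    def __init__(self):
        self.root = TrieNode()

    def add(self, query, count=1):
        node = self.root
        for ch in query:
            node = node.children.setdefault(ch, TrieNode())
        node.count += count

    def complete(self, prefix, k=3):
        """Return up to k stored queries starting with prefix, most frequent first."""
        node = self.root
        for ch in prefix:
            node = node.children.get(ch)
            if node is None:
                return []
        found, stack = [], [(node, prefix)]
        while stack:                      # depth-first walk of the subtree
            current, text = stack.pop()
            if current.count:
                found.append((current.count, text))
            for ch, child in current.children.items():
                stack.append((child, text + ch))
        found.sort(key=lambda item: (-item[0], item[1]))
        return [text for _, text in found[:k]]


trie = QueryTrie()
for query, count in [("facebook", 50), ("face", 5), ("fast food", 20), ("firefox", 30)]:
    trie.add(query, count)
print(trie.complete("fa", k=2))
```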

Cosmos Massive computations in Microsoft (comparison with Map Reduce, Hadoop and Skeleton Programming)

Map & Reduce is a distributed computation paradigm made popular by Google. Programs written in this functional style are automatically parallelized and executed on a large cluster of commodity machines. Many people believe that Map&Reduce was inspired by the traditional map and reduce (fold) operations typically adopted in functional programming. I believe that there are additional similarities with the less popular skeleton programming paradigm (the equivalent of design patterns for parallel computation). In fact, Map&Reduce can be considered a particular type of farm, where the collectors/reducers are guaranteed to receive the data in sorted-by-key order. Both Map&Reduce and skeleton programming delegate to the compiler the task of mapping the expressed computation onto the execution nodes. In addition, both of them hide the boring data marshaling/un-marshaling operations. Some people pointed out that one limit of Map&Reduce is the difficulty of expressing more complex types of computation, such as loops or pipes. In general, those operations are emulated by adopting an external program that coordinates the execution of several sequential map&reduce stages. Within a given map&reduce stage the system can exploit parallelism, but different stages are executed in sequence.
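As a single-process illustration of the model described above, the sketch below runs a map phase, sorts the intermediate pairs by key (standing in for the shuffle that delivers data to reducers in sorted-by-key order), and then runs a reduce phase per key. The function names and the word-count example are illustrative only; no real cluster, Hadoop API or Google API is involved.

```python
from itertools import groupby
from operator import itemgetter


def run_map_reduce(records, mapper, reducer):
    """Toy, sequential Map&Reduce: map, sort by key, then reduce each group."""
    # Map phase: every record may emit any number of (key, value) pairs.
    pairs = [pair for record in records for pair in mapper(record)]
    # "Shuffle": reducers see their input grouped and sorted by key.
    pairs.sort(key=itemgetter(0))
    results = []
    for key, group in groupby(pairs, key=itemgetter(0)):
        results.extend(reducer(key, [value for _, value in group]))
    return results


def count_mapper(line):
    for word in line.split():
        yield word, 1


def count_reducer(word, ones):
    yield word, sum(ones)


print(run_map_reduce(["a b a", "b c"], count_mapper, count_reducer))
```

A multi-stage job would call run_map_reduce repeatedly from an outer driver, which is exactly the emulation of loops and pipes mentioned in the text.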


The adoption of SQL-like interfaces is one interesting extension to Map&Reduce. SQL-like statements make it possible to express parallel computations with no need to write code in a specific programming language (Java, C++ or whatever). These extensions were originally inspired by Google's Sawzall, and they have been made popular by Yahoo's Hadoop Pig and by Facebook's Hadoop Hive. All those extensions are very useful syntactic sugar, but they do not extend the Map&Reduce model.

Scope (Structured Computations Optimized for Parallel Execution) is a new declarative and extensible scripting language targeted at massive data analysis. Quoting the paper "Scope: Easy and Efficient Parallel Processing of Massive Data Sets": "However, this model (Map&Reduce) has its own set of limitations. Users are forced to map their applications to the map-reduce model in order to achieve parallelism. For some applications this mapping is very unnatural. Users have to provide implementations for the map and reduce functions, even for simple operations like projection and selection. Such custom code is error-prone and hardly reusable. Moreover, for complex applications that require multiple stages of map-reduce, there are often many valid evaluation strategies and execution orders. Having users implement (potentially multiple) map and reduce functions is equivalent to asking users specify physical execution plans directly in database systems. The user plans may be suboptimal and lead to performance degradation by orders of magnitude"

In other words, you can leverage loops, pipes and many other types of parallel patterns with no need to emulate those steps as in pure Map&Reduce. The process is transparent to the user, since she can just express a collection of SQL-like statements (a "Script") and the compiler will generate the most appropriate parallel execution patterns. For instance (Example 3 in the article):

    R1 = SELECT A+C AS ac, B.Trim() AS B1
         FROM R
         WHERE StringOccurs(C, "xyz") > 2

    #CS
    public static int StringOccurs(string str, string ptrn)
    {
        int cnt=0; int pos=-1;
        while (pos+1 < str.Length) {
            pos = str.IndexOf(ptrn, pos+1);
            if (pos < 0) break;
            cnt++;
        }
        return cnt;
    }
    #ENDCS

Here A, B, C are columns in a SQL-like schema, and the C# string function StringOccurs is used to filter on column C. This example shows how to write a user-defined function in Scope.

Scope runs on top of the Cosmos storage system. "The Cosmos Storage System is an append-only file system that reliably stores petabytes of data. The system is optimized for large sequential I/O. All writes are append-only and concurrent writers are serialized by the system. Data is distributed and replicated for fault tolerance and compressed to save storage and increase I/O throughput."

The Scope language supports:

- Join: SQL-like;

- Select: SQL-like;

- Reduce: "it takes as input a rowset that has been grouped on the grouping columns specified in the ON clause, processes each group, and outputs zero, one or multiple rows per group";

- Process: "it takes a rowset as input, processes each row in turn, and outputs a sequence of rows";

- Combine: "it takes two input rowsets, combines them in some way, and outputs a sequence of rows";

Note that the users need to know neither the optimal mapping nor the optimal number of processes allocated for a particular SCOPE script execution. Everything is transparently computed by the SCOPE compiler and mapped on top of a well-defined set of "parallel design patterns". Dryad provides the basic primitives for SCOPE execution and Cosmos job allocation. Please refer to this page if you are interested in more information about Dryad, or if you want to download the academic release of DryadLINQ.

This expressive power makes it possible to write massive computations with very little SQL-like scripting (again from the above paper):

    SELECT query, COUNT(*) AS count
    FROM "search.log" USING LogExtractor
    GROUP BY query
    HAVING count > 1000
    ORDER BY count DESC;
    OUTPUT TO "qcount.result";

Here petabytes (and more!) of search logs are stored on the distributed Cosmos storage and processed in parallel to mine the queries with a count above 1000. Another example, this time with joins:

    SUPPLIER = EXTRACT s_suppkey, s_name, s_address, s_nationkey, s_phone, s_acctbal, s_comment
               FROM "supplier.tbl" USING SupplierExtractor;
    PARTSUPP = EXTRACT ps_partkey, ps_suppkey, ps_supplycost
               FROM "partsupp.tbl" USING PartSuppExtractor;
    PART = EXTRACT p_partkey, p_mfgr
           FROM "part.tbl" USING PartExtractor;

    // Join region, nation, and, supplier
    // (Retain only the key of supplier)
    RNS_JOIN = SELECT s_suppkey, n_name
               FROM region, nation, supplier
               WHERE r_regionkey == n_regionkey AND n_nationkey == s_nationkey;

    // Now join in part and partsupp
    RNSPS_JOIN = SELECT p_partkey, ps_supplycost, ps_suppkey, p_mfgr, n_name
                 FROM part, partsupp, rns_join
                 WHERE p_partkey == ps_partkey AND s_suppkey == ps_suppkey;

    // Finish subquery so we get the min costs
    SUBQ = SELECT p_partkey AS subq_partkey, MIN(ps_supplycost) AS min_cost
           FROM rnsps_join
           GROUP BY p_partkey;

    // Finish computation of main query
    // (Join with subquery and join with supplier
    // again to get the required output columns)
    RESULT = SELECT s_acctbal, s_name, p_partkey, p_mfgr, s_address, s_phone, s_comment
             FROM rnsps_join AS lo, subq AS sq, supplier AS s
             WHERE lo.p_partkey == sq.subq_partkey AND lo.ps_supplycost == min_cost AND lo.ps_suppkey == s.s_suppkey
             ORDER BY acctbal DESC, n_name, s_name, partkey;

    // output result
    OUTPUT RESULT TO "tpchQ2.tbl";

Cosmos and Scope are an important part of the Bing & Hotmail systems, as described in "Server Engineering Insights for Large-Scale Online Services", an in-depth analysis of three very large-scale production Microsoft services (Hotmail, Cosmos, and Bing) that together capture a wide range of characteristics of online services.

Wired, Is the Web really dead? Reading numbers the way you like

Wired published an article, "The Web Is Dead. Long Live the Internet", containing the enclosed image. According to the authors, the whole Web model is "dead" since this type of traffic is progressively declining. Apps are the new rising star.

Many people expressed perplexity about the relation between the graph (traffic on the Internet) and the conclusion of the article, "The Web Is Dead. Long Live the Internet".

In more detail, the fundamentally misleading assumption is to consider the relative percentage growth instead of the absolute growth. Quoting Boing Boing: “In fact, between 1995 and 2006, the total amount of web traffic went from about 10 terabytes a month to 1,000,000 terabytes (or 1 exabyte). According to Cisco, the same source Wired used for its projections, total internet traffic rose then from about 1 exabyte to 7 exabytes between 2005 and 2010.”

Web traffic had a huge increase, but this increase was relatively smaller when compared to video traffic. Probably this is also due to the data size of each video ;-). The same consideration applies to email, DNS, etc.

Another misleading assumption is the definition of Video. Cisco created such a category, which includes things like (Skype) video calls and Netflix, but ALSO YouTube and Hulu web traffic. It is questionable whether this definition is correct. Perhaps YouTube video traffic should legitimately be considered part of Web traffic, because it is a type of Web browser traffic.

Tuesday, September 7, 2010

Longest non decreasing subsequence

The problem of the longest increasing subsequence is defined and solved here. Starting from that, can you solve the following: find the non-decreasing subsequence of length k with maximum sum in an array of n integers?
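One straightforward (not necessarily optimal) way to solve this is dynamic programming over the subsequence length: for each length from 1 to k, keep the best sum of a non-decreasing subsequence of that length ending at each index. The sketch below runs in O(k n^2) time and returns only the sum; the function name is illustrative.

```python
def max_sum_non_decreasing(values, k):
    """Max sum of a non-decreasing subsequence of exactly k elements, or None."""
    n = len(values)
    if k < 1 or k > n:
        return None
    neg_inf = float("-inf")
    # best[i]: best sum of a subsequence of the current length ending at index i.
    best = list(values)                      # length 1
    for _ in range(2, k + 1):
        longer = [neg_inf] * n
        for i in range(n):
            for j in range(i):
                # values[j] may precede values[i] only if order is kept.
                if values[j] <= values[i] and best[j] != neg_inf:
                    longer[i] = max(longer[i], best[j] + values[i])
        best = longer
    answer = max(best)
    return None if answer == neg_inf else answer


print(max_sum_non_decreasing([8, 5, 9, 10, 5, 6, 21, 8], 3))
```

In the sample array the best choice is 9, 10, 21.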

Monday, September 6, 2010

sum to 0

Given a list of n integers (negative and positive), not sorted and duplicates allowed, you have to output the triplets which sum to 0
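A common O(n^2) approach sorts the list and then, for each first element, closes in on the other two with a pair of pointers. The sketch below assumes that triplets are reported once per distinct combination of values, even when the input contains duplicates; the function name is illustrative.

```python
def zero_sum_triplets(values):
    """Return the distinct value triplets (a, b, c), a <= b <= c, with a + b + c == 0."""
    nums = sorted(values)
    n = len(nums)
    triplets = []
    for i in range(n - 2):
        if i > 0 and nums[i] == nums[i - 1]:
            continue                      # same first value already handled
        if nums[i] > 0:
            break                         # three positive numbers cannot sum to 0
        lo, hi = i + 1, n - 1
        while lo < hi:
            total = nums[i] + nums[lo] + nums[hi]
            if total < 0:
                lo += 1
            elif total > 0:
                hi -= 1
            else:
                triplets.append((nums[i], nums[lo], nums[hi]))
                lo += 1
                hi -= 1
                # Skip over repeated values so each triplet appears once.
                while lo < hi and nums[lo] == nums[lo - 1]:
                    lo += 1
                while lo < hi and nums[hi] == nums[hi + 1]:
                    hi -= 1
    return triplets


print(zero_sum_triplets([-1, 0, 1, 2, -1, -4]))
```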

Sunday, September 5, 2010

Number of 1 in a sequence

Compute the number of sequences of n binary digits that do NOT contain consecutive 1 symbols.
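One way to count these sequences is to track how many valid sequences end in 0 and how many end in 1: a 0 can follow anything, while a 1 can only follow a 0. This yields a Fibonacci-style recurrence. The sketch below also includes a brute-force checker for small n; both function names are illustrative.

```python
from itertools import product


def count_no_consecutive_ones(n):
    """Number of binary strings of length n with no two adjacent 1s."""
    if n < 0:
        raise ValueError("n must be non-negative")
    ends_in_0, ends_in_1 = 1, 0           # the empty string, counted once
    for _ in range(n):
        # Append 0 to any valid string; append 1 only after a 0 (or nothing).
        ends_in_0, ends_in_1 = ends_in_0 + ends_in_1, ends_in_0
    return ends_in_0 + ends_in_1


def brute_force(n):
    """Enumerate all 2**n strings; only sensible for small n."""
    return sum(1 for bits in product("01", repeat=n) if "11" not in "".join(bits))


print([count_no_consecutive_ones(n) for n in range(1, 8)])
```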

Saturday, September 4, 2010

Partition an array

You have an array containing only numbers in 0-9. You have to divide it into two arrays such that the sum of the elements in each array is the same.
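This is the classic partition problem, and because every element is a single digit the target sum is small, so a subset-sum dynamic program works well. The sketch below assumes the two output arrays may take elements from any positions (not just a prefix and a suffix) and returns None when no split exists; the function name is illustrative.

```python
def equal_sum_partition(values):
    """Split values into two lists with equal sums, or return None."""
    total = sum(values)
    if total % 2:
        return None                        # an odd total can never be halved
    target = total // 2
    # reachable[s] = indices of one subset whose elements sum to s
    reachable = {0: []}
    for index, value in enumerate(values):
        for partial, chosen in list(reachable.items()):
            new_sum = partial + value
            if new_sum <= target and new_sum not in reachable:
                reachable[new_sum] = chosen + [index]
        if target in reachable:
            break
    if target not in reachable:
        return None
    picked = set(reachable[target])
    first = [v for i, v in enumerate(values) if i in picked]
    second = [v for i, v in enumerate(values) if i not in picked]
    return first, second


print(equal_sum_partition([3, 1, 1, 2, 2, 1]))
```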

Friday, September 3, 2010

How Facebook works?

After the post about Google (WSDM09), here is an interesting talk about how things are done at Facebook. A lot of open source is used, including MySQL, CFengine (for configuration), Thrift (for RPC), Hadoop (for MapReduce and data storage), Scribe (for data migration), BitTorrent (for code deployment, which is crazy but fascinating), Hive (for SQL-like map-reduce), Ganglia (for cluster monitoring), Apache, memcached, etc.

Thursday, September 2, 2010

Two beautiful Hadoop books

Hadoop has become the de facto standard for industrial parallel processing. Congrats to Doug, who inspired this project and has worked on it since the very beginning.

Recently, I reviewed two beautiful books that I would suggest for your education:

- Data-Intensive Text Processing with MapReduce which focuses on how to think and design algorithms in "MapReduce", with an emphasis on text processing algorithms common in natural language processing, information retrieval, and machine learning.

- Hadoop: The Definitive Guide, with an emphasis on how to program a large Hadoop cluster, and with real examples of industrial use in large organizations such as Last.Fm, Facebook and others.

Google and AOL renewed the contract

Nothing new, this was expected. "Mr Armstrong, who took over as the company’s top executive last year, has vowed to restructure the once formidable internet service provider into one of the internet’s biggest content producers"

How many search providers are still out there?

Different approaches to Universal Search.

Universal search is about having a heterogeneous set of vertical retrieval systems (Web, Image, Video, News, Books, etc.) which collaborate to retrieve the right set of heterogeneous results. There are three approaches:

- Having a set of classifiers, one for each domain, that guess whether that particular domain will have a set of relevant results for the given query. The pro is that you avoid sending all the queries to all the verticals. The con is that you can only guess the validity of the results, since you are not sending the real query to the vertical. This is Google pre-2004.

- Having an infrastructure good enough to support Web-like traffic in all the different verticals. This is Google post-2004. Note that this is a pull approach.

- Another solution, not adopted by Google, is to push a set of relevant queries from the vertical to the query distributor. Consider that each vertical knows, at every given instant of time, the type of queries it can serve with good enough quality.
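The third (push) approach in the list above can be sketched in a few lines: each vertical periodically tells the distributor which queries it can serve well, and the distributor only fans a query out to the verticals that claimed it. Everything in this sketch (class name, method names, the always-on "web" default, exact-match lookup) is a hypothetical simplification, not a description of any real search engine.

```python
from collections import defaultdict


class QueryDistributor:
    """Routes a query to the verticals that pushed it as servable."""

    def __init__(self):
        self._verticals_by_query = defaultdict(set)

    def push(self, vertical, queries):
        """Called by a vertical to announce queries it can answer well."""
        for query in queries:
            self._verticals_by_query[query.lower()].add(vertical)

    def route(self, query, default=("web",)):
        """Return the sorted list of verticals that should receive the query."""
        pushed = self._verticals_by_query.get(query.lower(), set())
        return sorted(set(default) | pushed)


distributor = QueryDistributor()
distributor.push("news", ["earthquake"])
distributor.push("video", ["Earthquake", "funny cats"])
print(distributor.route("earthquake"))
```

The trade-off against the pull approach is freshness: the distributor is only as accurate as the last push from each vertical.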

Wednesday, September 1, 2010

Hive and the SQL-Like fashion

Hive is a nice SQL-like interface on top of Hadoop, an open source platform created by Doug Cutting. More and more, databases are being used for workloads other than online transactions.

Fulcrum was the first time I saw a SQL-like interface for retrieval, back in 1996. Now it's funny to see the Map/Reduce paradigm expressed in a SQL-like fashion, as in

    FROM (
      FROM pv_users
      MAP pv_users.userid, pv_users.date
      USING 'map_script'
      AS dt, uid
      CLUSTER BY dt) map_output
    INSERT OVERWRITE TABLE pv_users_reduced
      REDUCE map_output.dt, map_output.uid
      USING 'reduce_script'
      AS date, count;
